Author: K-12 AI Infrastructure Program Team


Definition

Formative assessment is a key aspect of many applications of AI to education. Through the production of public goods (as defined in the Request for Proposals), the K-12 AI Infrastructure Program seeks to aid developers of AI-enabled products and services. To ground the meaning of formative assessment, we start by defining it without reference to technology:

“Practice in a classroom is formative to the extent that evidence about student achievement is elicited, interpreted, and used by teachers, learners, or their peers, to make decisions about the next steps in instruction that are likely to be better, or better founded, than the decisions they would have taken in the absence of the evidence that was elicited.” (Black & Wiliam, 2009, p. 9)

In practice, these decisions are the concrete instructional choices that teachers, learners, or peers make in response to evidence of learning. They can range from immediate, in-the-moment adjustments, such as rephrasing a question, offering a hint, or selecting a different example, to longer-term planning choices, such as revisiting a concept in the next lesson or modifying the pace of instruction. The core idea is that evidence is most useful when it changes what happens next for learners and when those changes increase learning.

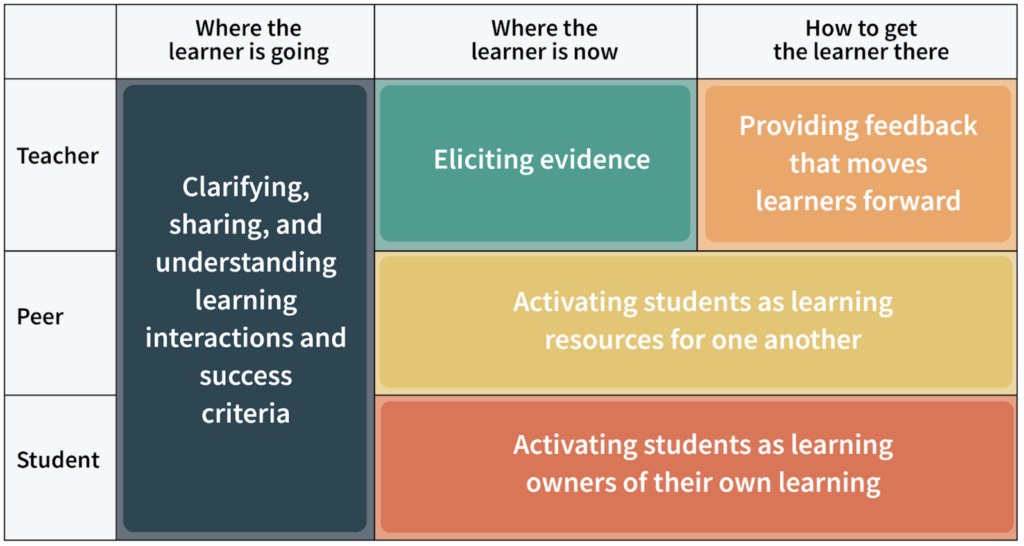

Wiliam later developed the image in Figure 1, which usefully clarifies the realm of formative assessment as including of teacher, peer, and learner roles and requiring goal clarification, eliciting evidence, and planning next steps. Below, we will elaborate on the many opportunities for AI to enhance this image

Importantly, formative assessment is not limited to testing students in the conventional sense, e.g. a quiz. It can be based on classroom observations, discussions, and examining student work. Teachers are naturally involved in multiple ways: directly interacting with students, adapting instructional resources for student use, and stimulating students’ active learning.

Figure 1: Unpacking Formative Assessment.

Similarly, “evidence” in formative assessment extends beyond academic performance to include student effort, motivation, social engagement, and other relevant strengths and assets. Paying attention to these dimensions helps teachers understand not just what students know, but how they are approaching their learning. This information can shape both instructional decisions and how teachers communicate with students.

However, gathering evidence alone is not sufficient: it is only formative assessment if it leads to improved instructional decision-making. These decisions can include how to interpret the evidence, what to talk about with students, how to talk about it, and which instructional resources and activities to use next. Those resources and activities can themselves be generated or adapted in response to the evidence.

Formative assessment is applicable across K-12 grade levels and subject matters. In the context of AI, this means that technologies such as computer vision, automatic speech recognition, and dialogue analysis can support evidence gathering, while AI-powered tools can assist with planning, recommending resources, and adapting instruction—expanding the possibilities for formative assessment at scale.

Efficacy

Many research syntheses and meta-analyses support the claim that formative assessment is efficacious (e.g., Klute et al., 2017). Many educators are aware that John Hattie (2009, 2012) lists feedback and formative assessment among the most effective instructional processes. And yet, like any educational intervention, the benefits of formative assessment depend on how well it is enacted (Kingston & Nash, 2011). Quality varies, and so do impacts.

The seminal review by Black and Wiliam (1998) examined more than 250 studies and found that formative assessment can lead to substantial learning gains. However, later research using more rigorous methods found smaller, but still meaningful, benefits. Kingston and Nash (2011) reviewed K-12 studies and found that the positive effects varied by subject, with English language arts showing the strongest benefits, followed by mathematics and science. More recent reviews confirm these patterns: Lee et al. (2020) and Jiang et al. (2024) both found consistent positive effects on student learning across dozens of studies in U.S. and international settings.

How formative assessment is delivered also matters. Graham et al. (2015) found that in writing instruction, feedback from teachers produced the strongest improvements, followed by student self-assessment, peer feedback, and computer-based feedback. A comprehensive review by Lipnevich et al. (2024) synthesizing 13 prior reviews concluded that formative assessment consistently helps students learn, with no studies showing negative effects. Overall, the research indicates that well-implemented formative assessment reliably improves student outcomes, though results depend on how well it is carried out, the subject area, and the specific practices used.

Validity and Reliability

Validity means that the formative assessment, when used for its intended purpose, provides high-quality evidence of student learning and enables adapting instruction in ways that benefit student learning (AERA, APA, & NCME, 2014). Validity is not a property of an instrument alone but of the interpretation-and-use argument that links observed student work to instructional decisions (Cronbach & Meehl, 1955; Kane, 2006). Accordingly, developers should provide validity evidence showing that the formative assessment works for its intended purpose. At a minimum, this evidence should show that: (1) the formative assessments are well aligned to the target knowledge and skills; (2) the formative assessments actually elicit the kinds of thinking and strategies they are intended to measure; (3) scoring and reporting are consistent and accurate enough to support the kinds of instructional decisions teachers will make; (4) results relate to other credible measures in expected ways; and (5) the formative assessments support beneficial and fair instructional actions for different groups of students (Messick, 1989; AERA, APA, & NCME, 2014). Because instructional decisions depend on the stability of evidence, developers should also report the reliability/precision of scores or classifications at a level appropriate to the intended formative decisions (AERA, APA, & NCME, 2014).
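As one illustration of point (3) and of reporting the reliability of classifications, a developer might compare an AI tool's formative classifications with a trained teacher's classifications of the same student work. The sketch below computes percent agreement and Cohen's kappa (agreement corrected for chance). The category labels, the data, and the function names are hypothetical, and kappa is only one of several possible reliability indices; it is not presented as the program's required method.

```python
from collections import Counter


def percent_agreement(rater_a, rater_b):
    """Share of items on which two raters assigned the same category."""
    if not rater_a or len(rater_a) != len(rater_b):
        raise ValueError("ratings must be non-empty lists of equal length")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return matches / len(rater_a)


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa: observed agreement corrected for agreement expected by chance."""
    p_observed = percent_agreement(rater_a, rater_b)
    n = len(rater_a)
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement uses each rater's own marginal category frequencies.
    categories = set(counts_a) | set(counts_b)
    p_chance = sum((counts_a[c] / n) * (counts_b[c] / n) for c in categories)
    if p_chance == 1.0:
        # Both raters used one identical category for every item; kappa is undefined,
        # so this sketch reports perfect agreement by convention.
        return 1.0
    return (p_observed - p_chance) / (1 - p_chance)


# Hypothetical decisions for eight pieces of student work.
teacher = ["reteach", "extend", "scaffold", "reteach", "extend", "scaffold", "reteach", "extend"]
ai_tool = ["reteach", "extend", "reteach", "reteach", "extend", "scaffold", "scaffold", "extend"]

print(f"agreement = {percent_agreement(teacher, ai_tool):.2f}")  # agreement = 0.75
print(f"kappa     = {cohens_kappa(teacher, ai_tool):.2f}")       # kappa     = 0.62
```

Here the raw agreement (0.75) overstates consistency somewhat, because some matches would occur by chance; kappa adjusts for that. Whether a given level is "good enough" depends on the stakes of the formative decision it supports.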

Teachers

There is substantial literature discussing the teacher professional development required for strong classroom implementations of formative assessment (e.g., Wiliam, 2018). One obvious issue is reducing the time and burden on teachers to gather evidence and adapt their instructional plans. And yet, when teachers offload formative assessment to 1:1 technologies used directly by students, the lack of coordination and alignment with teacher-led instruction can emerge as a major challenge. It can also be challenging to make sense of student thinking (to interpret the evidence) and to adapt instruction in ways that remain coherent with the underlying curriculum while responding to students’ specific strengths and needs.

Formative assessment is also often integrated into more comprehensive programs of teacher professional development. For example, Cognitively Guided Instruction (CGI), a well-known program in mathematics, focuses on making sense of and building on students’ mathematical strategies and problem-solving approaches. In CGI, formative assessment is one component of a broader program of professional development for mathematics educators.

Technology

Technology can be, and often is, used to support formative assessment. Rigorous studies have established that this use of technology can be efficacious (Roschelle et al., 2016), with some syntheses reporting greater effect sizes for formative assessment when it is supported by technology (van der Kleij et al., 2015).

In one example (Roschelle et al., 2016), a formative assessment intervention was built around ASSISTments software. In middle school math classrooms, teachers assigned homework problems to students in ASSISTments, and students received feedback, hints, and guidance as they worked on the assignments at home. Teachers’ workload decreased because ASSISTments presented the results of student homework in a neatly organized table. Teachers’ practice changed because they could focus classroom discussions on specific homework problems and wrong answers (Fairman, Feng, & Roschelle, 2025). In other words, teachers modified their next instructional steps: they spent more time on fewer problems and targeted their discussion to the evidence of what support students needed. A statistically significant and meaningful effect was observed, and the effect was stronger for students who were struggling in mathematics (Murphy et al., 2020). Another study also observed a longer-term effect (Feng, Huang, & Collins, 2023).

The ASSISTments example is useful because it is a case where technology both supported students directly and helped their teachers. For teachers, it helped with preparing formative assessment assignments, with grading, and with interpreting results to inform instructional decisions. The study also demonstrated one aspect of targeted universalism: students with weaker prior performance learned more, but students with stronger prior performance also gained.
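The kind of per-problem summary described in the ASSISTments example can be sketched as follows. This is not the ASSISTments data model or report; the `Response` record format and the `summarize_by_problem` function are hypothetical. For each problem, the sketch computes the percent of students answering correctly and the most common wrong answer, then lists the weakest problems first, which is the information a teacher would need to choose a few problems and wrong answers for class discussion.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass
class Response:
    """One student's answer to one homework problem (hypothetical record format)."""
    student: str
    problem: str
    answer: str
    correct: bool


def summarize_by_problem(responses):
    """Return (problem, percent_correct, most_common_wrong_answer) rows,
    sorted so the problems with the lowest percent correct come first."""
    by_problem = defaultdict(list)
    for r in responses:
        by_problem[r.problem].append(r)

    rows = []
    for problem, items in by_problem.items():
        pct_correct = 100 * sum(r.correct for r in items) / len(items)
        wrong_answers = Counter(r.answer for r in items if not r.correct)
        top_wrong = wrong_answers.most_common(1)[0][0] if wrong_answers else None
        rows.append((problem, round(pct_correct, 1), top_wrong))

    # Low-success problems, and their most frequent wrong answer, are candidates for discussion.
    rows.sort(key=lambda row: row[1])
    return rows


homework = [
    Response("ana", "P1", "12", True),
    Response("ben", "P1", "12", True),
    Response("cai", "P1", "21", False),
    Response("ana", "P2", "7.5", False),
    Response("ben", "P2", "7.5", False),
    Response("cai", "P2", "0.75", True),
]

for row in summarize_by_problem(homework):
    print(row)
# ('P2', 33.3, '7.5')
# ('P1', 66.7, '21')
```

Surfacing the most frequent wrong answer, not just the score, is what lets discussion target a specific misunderstanding (here, a misplaced decimal point on P2).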

Learning Sciences Concepts

A wide range of established learning science concepts can be used to guide formative assessment. These research-based principles describe what effective teachers do when gathering and responding to evidence of student learning. They can be organized around three core activities (Table 1).

Table 1. Foundational Learning Sciences Concepts

| Core activity | Learning sciences concepts |
|---|---|
| Eliciting evidence |  |
| Interpreting evidence |  |
| Acting on evidence |  |

Evidence-Centered Design (ECD; Mislevy et al., 2003) is a research-based framework for systematically designing, developing, and validating assessments. In K-12 formative assessment, the goal is to elicit evidence that supports immediate instructional adjustments and guides students’ next steps (Black & Wiliam, 1998; Heritage, 2010). Applied to this setting, ECD’s claim–evidence–task structure serves as a practical “blueprint”: specify the learning claim (what proficiency looks like), define what counts as evidence (e.g., work products, explanations, error patterns), and design tasks, scaffolds, and scoring guides that reliably elicit and interpret that evidence during instruction (Mislevy et al., 2003). Crucially, ECD also makes the inference explicit by articulating why the selected evidence warrants the claim and by specifying decision rules for how different patterns of evidence trigger a particular instructional response (e.g., reteach, extend, scaffold). This strengthens formative assessment by making classroom checks (e.g., questioning routines, exit tickets, performance tasks) intentionally diagnostic and by supporting feedback that is timely, specific, and usable, so that the information collected is actionable for both instructional adjustments and student revisions (Shute, 2008; Wiliam, 2011). When shared as clear success criteria, the same evidence and decision rules also support students’ self-monitoring and revision during learning, not just teacher action.
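To make the claim, warrant, evidence, and decision-rule pieces of ECD concrete, here is a minimal sketch for a single exit ticket. The claim text, the evidence fields, and especially the thresholds in `decide` are hypothetical choices for illustration; in an actual ECD process they would come from the design analysis and be validated, not set by hand as here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    statement: str  # what proficiency looks like
    warrant: str    # why the chosen evidence supports the claim


@dataclass
class Evidence:
    items_correct: int                   # exit-ticket items answered correctly
    items_total: int
    explained_reasoning: bool            # did the student justify the answer?
    error_pattern: Optional[str] = None  # a recognized error pattern, if any


def decide(evidence: Evidence) -> str:
    """Hypothetical decision rules: map a pattern of evidence to a next step."""
    share = evidence.items_correct / evidence.items_total
    if share >= 0.8 and evidence.explained_reasoning and evidence.error_pattern is None:
        return "extend"
    if evidence.error_pattern is not None or share < 0.5:
        return "reteach"
    return "scaffold"


claim = Claim(
    statement="Compares fractions with unlike denominators and justifies the comparison.",
    warrant="Correct answers plus a valid justification are unlikely without the target understanding.",
)

students = {
    "ana": Evidence(5, 5, True),
    "ben": Evidence(2, 5, False, "compares denominators only"),
    "cai": Evidence(4, 5, False),
}

print(f"Claim: {claim.statement}")
for name, ev in students.items():
    print(name, decide(ev))
# Loop output:
# ana extend
# ben reteach
# cai scaffold
```

Writing the rules down this explicitly is what makes them inspectable: a teacher can see that "cai" is routed to scaffolding because the answers were correct but unexplained, and can argue with that rule if it does not fit the classroom.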

AI and the Future

In practice, formative assessment is central to most applications of AI in education. In AI tutoring, for example, the system examines evidence of what a student knows or is struggling with, plans how to respond, and delivers instruction while monitoring for improvement. While “personalized instruction” is often a vague aspiration, formative assessment offers a more precise framework for how instruction adapts to student needs based on evidence. Responsible use of AI for formative assessment, however, requires establishing that it can perform these functions validly.

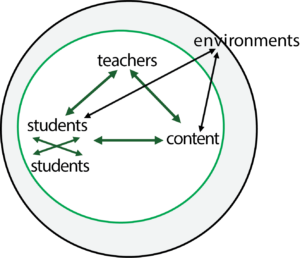

It can be useful to think about AI’s role in the context of the Instructional Triangle (Cohen, Raudenbush, & Ball, 2003), which analyzes instruction as occurring among teachers, students, and content in an environment or context. AI can potentially participate in the triangle in multiple roles, for example as a tutor, as an assistant to a teacher, as a teachable agent (cite) that allows students to express what they know, as a support for students to collaborate with each other, or in adapting content.

Figure 2. The Instructional Triangle (Cohen, et al., 2003)

The Instructional Triangle (Cohen, Raudenbush, & Ball, 2003) analyzes instruction as interactions among teachers, students, and content within an environment. AI can participate at each point of this triangle, creating opportunities for formative assessment support:

Teacher–Student interactions

- Clarifying instructional goals (e.g., as broadly given in curricular resources) and expressing what success looks like

- Reducing teacher workload associated with evidence gathering (e.g., interviewing students, analyzing their drawings for misconceptions or strategies, helping narrow down the focus for working with students)

- Strengthening teachers’ insights about their students (e.g., making sense of student reasoning, misconceptions, strengths, learning variability)

- Talking with students and looking at their work to better understand them

- Planning activities, discussions, worksheets, etc. to tune support for student learning based on the evidence

- Using formative assessment as an opportunity for teacher coaching

Student–Content interactions

- Providing high-quality feedback and guidance to students

- Planning appropriate next instructional steps for students based on the evidence, e.g., optimizing their practice on a skill they are learning or retaining

Student–Student interactions

- Using evidence to create small groups around a specific learning need, and regrouping as understanding changes

- Designing collaborative small group activities that require the participation of individual students

Teacher–Content interactions

- Understanding the design of their high-quality instructional materials as they reason about their students

- Choosing or adapting their high-quality instructional materials based on the evidence

Environment/Context

- Connecting formative assessments with other factors in the classroom or other educational setting, such as school-wide strategies or policies (e.g., regarding homework or instructional design), other benchmark, periodic, or end-of-year assessments, teachers’ preferred pedagogies, available or recommended instructional resources, and an understanding of students’ family or community settings
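The Student–Student item about forming small groups around a specific learning need, and regrouping as understanding changes, can be sketched as follows. The sketch assumes each student already has a single current "need" label produced by some earlier interpretation step; the labels, the `max_size` parameter, and the `form_groups` function are hypothetical. Regrouping is simply re-running the grouping on updated evidence.

```python
from collections import defaultdict


def form_groups(needs, max_size=4):
    """Group students who share a learning need, splitting groups larger than max_size.

    needs: dict mapping student name -> current learning-need label.
    Returns a dict mapping each need label -> list of groups (lists of names).
    """
    if max_size < 1:
        raise ValueError("max_size must be at least 1")
    by_need = defaultdict(list)
    for student in sorted(needs):  # sorted for reproducible groupings
        by_need[needs[student]].append(student)
    return {
        need: [students[i:i + max_size] for i in range(0, len(students), max_size)]
        for need, students in by_need.items()
    }


week1 = {
    "ana": "unlike denominators",
    "ben": "unlike denominators",
    "cai": "equivalent fractions",
    "dev": "unlike denominators",
}
print(form_groups(week1, max_size=2))
# {'unlike denominators': [['ana', 'ben'], ['dev']], 'equivalent fractions': [['cai']]}

# New evidence shows ben is ready to move on; regroup from the updated needs.
week2 = {**week1, "ben": "ready to extend"}
print(form_groups(week2, max_size=2))
# {'unlike denominators': [['ana', 'dev']], 'ready to extend': [['ben']], 'equivalent fractions': [['cai']]}
```

A real tool would also weigh factors the sketch ignores, such as avoiding single-student groups and the collaborative-activity design mentioned in the same list.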

The K-12 AI Infrastructure Program, in its first RFP, asks for proposals that produce public goods that advance formative assessment while incorporating one or more of the following:

- Multimodality, including using spoken language and making sense of student-drawn images.

- Learning sciences, operationalizing known learning science principles that are supportive of formative assessment

- Educator supportiveness, including features like explainability, support for teacher co-design, support for teacher learning, etc.

See the Request for Proposals for more information.

References

Ainsworth, S. (2006). DeFT: A conceptual framework for considering learning with multiple representations. Learning and Instruction, 16(3), 183–198. https://doi.org/10.1016/j.learninstruc.2006.03.001

American Educational Research Association, American Psychological Association, & National Council on Measurement in Education. (2014). Standards for educational and psychological testing. American Educational Research Association.

Ausubel, D. P. (1968). Educational psychology: A cognitive view. Holt, Rinehart & Winston.

Beck, J. E., & Gong, Y. (2013). Wheel-spinning: Students who fail to master a skill. In H. C. Lane, K. Yacef, J. Mostow, & P. Pavlik (Eds.), Artificial intelligence in education (pp. 431–440). Springer. https://doi.org/10.1007/978-3-642-39112-5_44

Black, P., & Wiliam, D. (1998). Assessment and classroom learning. Assessment in Education: Principles, Policy & Practice, 5(1), 7–74. https://doi.org/10.1080/0969595980050102

Black, P., & Wiliam, D. (2009). Developing the theory of formative assessment. Educational Assessment, Evaluation and Accountability, 21(1), 5–31. https://doi.org/10.1007/s11092-008-9068-5

Chi, M. T. H. (2005). Commonsense conceptions of emergent processes: Why some misconceptions are robust. Journal of the Learning Sciences, 14(2), 161–199. https://doi.org/10.1207/s15327809jls1402_1

Cohen, D. K., Raudenbush, S. W., & Ball, D. L. (2003). Resources, instruction, and research. Educational Evaluation and Policy Analysis, 25(2), 119–142. https://doi.org/10.3102/01623737025002119

Cronbach, L. J., & Meehl, P. E. (1955). Construct validity in psychological tests. Psychological Bulletin, 52(4), 281–302. https://doi.org/10.1037/h0040957

Fairman, J. C., Feng, M., & Roschelle, J. (2025). Teachers’ use of learning analytics data from students’ online math practice assignments to better focus instruction. Digital Experiences in Mathematics Education. https://doi.org/10.1007/s40751-025-00170-3

Feng, M., Huang, C., & Collins, K. (2023). Promising long term effects of ASSISTments online math homework support. In N. Wang et al. (Eds.), Proceedings of the International Conference on Artificial Intelligence in Education (AIED 2023): Late Breaking Results (pp. 212–217). Springer Nature. https://doi.org/10.1007/978-3-031-36336-8_32

Graham, S., Hebert, M., & Harris, K. R. (2015). Formative assessment and writing: A meta-analysis. The Elementary School Journal, 115(4), 523–547. https://doi.org/10.1086/681947

Hattie, J. (2009). Visible learning: A synthesis of over 800 meta-analyses relating to achievement. Routledge.

Hattie, J. (2012). Visible learning for teachers: Maximizing impact on learning. Routledge.

Heritage, M. (2010). Formative assessment: Making it happen in the classroom. Corwin. https://doi.org/10.4135/9781452219493

Jiang, Y., Lee, H., Chung, H. Q., Zhang, Y., Abedi, J., & Warschauer, M. (2024). The impact of formative assessment on K-12 learning: A meta-analysis. Educational Research and Evaluation, 29(7–8), 423–450. https://doi.org/10.1080/13803611.2024.2363831

Kane, M. T. (2006). Validation. In R. L. Brennan (Ed.), Educational measurement (4th ed., pp. 17–64). American Council on Education/Praeger.

Kingston, N., & Nash, B. (2011). Formative assessment: A meta-analysis and a call for research. Educational Measurement: Issues and Practice, 30(4), 28–37. https://doi.org/10.1111/j.1745-3992.2011.00220.x

Klute, M., Apthorp, H., Harlacher, J., & Reale, M. (2017). Formative assessment and elementary school student academic achievement: A review of the evidence (REL 2017–259). U.S. Department of Education, Institute of Education Sciences, National Center for Education Evaluation and Regional Assistance, Regional Educational Laboratory Central. http://ies.ed.gov/ncee/edlabs

Lee, H., Chung, H. Q., Zhang, Y., Abedi, J., & Warschauer, M. (2020). The effectiveness and features of formative assessment in US K-12 education: A systematic review. Applied Measurement in Education, 33(2), 124–140. https://doi.org/10.1080/08957347.2020.1732383

Linn, M. C. (2006). The knowledge integration perspective on learning and instruction. In R. K. Sawyer (Ed.), The Cambridge handbook of the learning sciences (pp. 243–264). Cambridge University Press.

Lipnevich, A. A., Guo, F., & Tay, L. (2024). A systematic review of meta-analyses on the impact of formative assessment on K-12 students’ learning: Toward sustainable quality education. Sustainability, 16(17), 7826. https://doi.org/10.3390/su16177826

Messick, S. (1989). Validity. In R. L. Linn (Ed.), Educational measurement (3rd ed., pp. 13–103). Macmillan.

Mislevy, R. J., Almond, R. G., & Lukas, J. F. (2003). A brief introduction to evidence-centered design. ETS Research Report Series, 2003(1), i–29. https://doi.org/10.1002/j.2333-8504.2003.tb01908.x

Moll, L. C., Amanti, C., Neff, D., & Gonzalez, N. (1992). Funds of knowledge for teaching: Using a qualitative approach to connect homes and classrooms. Theory Into Practice, 31(2), 132–141. https://doi.org/10.1080/00405849209543534

Murphy, R., Roschelle, J., Feng, M., & Mason, C. A. (2020). Investigating efficacy, moderators and mediators for an online mathematics homework intervention. Journal of Research on Educational Effectiveness, 13(2), 235–270. https://doi.org/10.1080/19345747.2019.1710885

National Research Council. (2000). How people learn: Brain, mind, experience, and school: Expanded edition. National Academies Press. https://doi.org/10.17226/9853

Roschelle, J., Feng, M., Murphy, R. F., & Mason, C. A. (2016). Online mathematics homework increases student achievement. AERA Open, 2(4), 1–12. https://doi.org/10.1177/2332858416673968

Shute, V. J. (2008). Focus on formative feedback. Review of Educational Research, 78(1), 153–189. https://doi.org/10.3102/0034654307313795

Smith, J. P., diSessa, A. A., & Roschelle, J. (1994). Misconceptions reconceived: A constructivist analysis of knowledge in transition. Journal of the Learning Sciences, 3(2), 115–163. https://doi.org/10.1207/s15327809jls0302_1

van der Kleij, F. M., Feskens, R. C. W., & Eggen, T. J. H. M. (2015). Effects of feedback in a computer-based learning environment on students’ learning outcomes: A meta-analysis. Review of Educational Research, 85(4), 475–511. https://doi.org/10.3102/0034654314564881

Wiliam, D. (2018). Assessment for learning: Meeting the challenge of implementation. Assessment in Education: Principles, Policy & Practice, 25(6), 682–685. https://doi.org/10.1080/0969594X.2017.1401526

Wiliam, D. (2025, September 8–9). Formative assessment in an AI world [Conference presentation]. Flourishing Learners Conference, Marvel Stadium, Melbourne, Australia.

Zimmerman, B. J. (2002). Becoming a self-regulated learner: An overview. Theory Into Practice, 41(2), 64–70. https://doi.org/10.1207/s15430421tip4102_2