Due Date: March 8, 2026 (11:59:59 PM Pacific Standard Time)

This is the first of several anticipated requests for proposals (RFPs) from the K-12 AI Infrastructure Program. Overall, the RFPs will fund teams to produce datasets, benchmarks, and/or models as public goods, intending to support improvements to multiple applications of artificial intelligence (AI) in K-12 education. This first RFP focuses on K-12 formative assessment and supports two tracks: Track 1 for proof of concept projects and Track 2 to enhance an existing asset.

Vision

Our vision in this RFP is to produce public goods that enable a wide variety of products and services to provide high-quality formative assessment to every student, with particular attention to the needs of U.S. students furthest from opportunity, thereby accelerating student success in multiple K-12 subject areas. The public goods will be licensed resources that can be broadly used and incorporated—directly or indirectly—by developers of AI-enabled tools and infrastructure for K-12 education, in order to improve the technology products that teachers and students rely on.

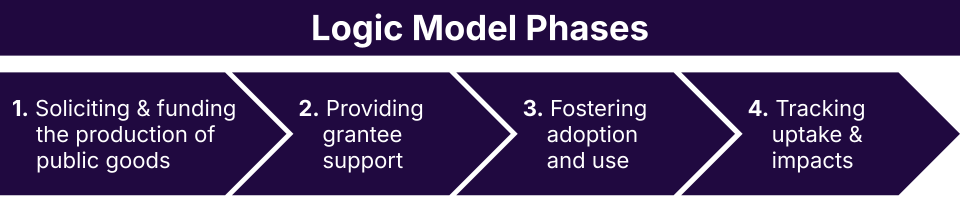

The logic model of our K-12 AI Program Team has four phases:

- Soliciting and funding the production of public goods through this RFP and subsequent RFPs.

- Providing grantee support during the period of performance to enable rapid progress towards high-quality, low-risk public goods and to forge fruitful relationships among the community of awardees.

- Fostering adoption and use, mostly after the period of performance (though some early adopter engagement may begin during funded projects), to make intended users aware of newly available public goods and to encourage their adoption.

- Tracking uptake and impacts as public goods become incorporated into a multitude of products and services. Incorporation can occur via pre-training datasets, benchmarks, Retrieval-Augmented Generation (RAG) systems, Model Context Protocol (MCP) servers, and other emergent methods that build a public good into a specific product or service to improve its educational value.

This logic model reflects a theory of change in which well-designed public goods, combined with early technical support and downstream adoption efforts, lead to widespread improvements in AI-enabled formative assessment practice.

Multimodality

We see the future of AI-enhanced formative assessment as based in multimodal interactions with students and teachers. Including more modalities, such as speaking, listening, drawing, and writing, can enable students to better demonstrate what they know and can do, which can lead to formative assessments that are more accurate and fair. Multiple modalities can respond to learner variability of many kinds. “Multimodal” thus specifically includes spoken communication, sketches, diagrams and other visuals, and perhaps tangible interactions. We anticipate continued use of clickstream, keystroke, and textual data and look towards growing opportunities to integrate commonplace and emerging modalities to advance AI-enabled formative assessment. Note: We are not presently investing in student or classroom video, or in sensor-based approaches (e.g., eye-tracking) that face challenges of practicality or heightened privacy concerns.

Principles

We further envision advancing AI-enhanced formative assessment by adhering to the following principles:

- Grounding in the learning sciences and operationalizing learning sciences constructs and techniques for (a) understanding what students know and can do and (b) designing adaptive plans for instructional next steps. There is a large, mature research literature on formative assessment and feedback that should inform the design of technical solutions. We are investing in operationalizing research-based insights in a public good, not funding new basic or foundational learning sciences research.

- Designing for Targeted Universalism to serve every learner while intentionally attending to specific population(s) that would strongly benefit from formative assessment that is tuned to their strengths and needs (powell et al., 2019). Given that AI enables more flexibility in how students interact with learning resources compared to older edtech designs, the breadth of possible adaptations to address student needs and strengths is greater than in the past.

- Supporting educators’ skills, knowledge, and implementation. For example, this might include performing well in explaining student evidence to educators and providing a rationale for recommendations; aligning to an educator’s curricular resources, such as their existing high-quality instructional materials (HQIM); supporting educators’ role as instructional leaders; and creating better opportunities for educators to increase their professional knowledge as they go. Formative assessment, overall, is a long-standing process in education; thus, much is known about how to support educators’ skills, knowledge, and implementation. We expect proposals to be grounded in this body of knowledge.

Over the course of four years and multiple RFPs, we aim to build a collection of public goods that enables breakthroughs in the design, improvement, and implementation of AI-enabled, research-based formative assessment practices to support educators and improve student success. Within this vision, one opportunity for impact is related to validity: this program could fund deliverables that support product and service developers in establishing validity of formative assessments with respect to targeted universalism. This includes, for example, producing resources that support technical users in establishing fairness given the potential for bias. There are many validity concerns; we are especially looking for approaches that address aspects of validity with immediate practical applications for a wide range of product and service developers.

We encourage attention to the section associated with this RFP, Formative Assessment and Public Goods for AI in Education, for more insight into the expectations of this RFP. For more information, read Evaluation Criteria 1: Significance closely in the Proposal Guidance section below.

Defining Public Goods

We are investing in public goods that can be adopted by a technical user (e.g., a learning engineer, data scientist, model developer, educational application developer, etc.). The public goods must be modular, foundational building blocks that lead to developing or improving educational applications for formative assessment. We anticipate public datasets being valuable in their own right, as well as public datasets that provide a foundation for creating benchmarks or improving models.

Three kinds of public goods are possible. Proposers should consider the modest amounts of funding in this first RFP as they decide what kind(s) of public good to focus on. The significance section can explain how the focal public good(s) could lead to other public goods, e.g., a dataset can be used by others to develop a benchmark or fine-tune a model, or could be used as a basis for creating additional synthetic data, etc. The three types are:

- AI Datasets: Collections of data curated and structured specifically to train, fine-tune, support, or evaluate applications of AI toward our vision. Examples may include transcripts, instructional materials, student work, student drawings, etc. We emphasize our interest in datasets explicitly designed to enable benchmarking and evaluation workflows, not only model pre-training and fine-tuning. Datasets should be appropriately annotated for use and support the development of further annotation. We also anticipate supporting advanced workflows that extend layers of annotation (e.g., additional human raters) and/or generate additional synthetic data. With regard to validity concerns, we welcome datasets that could be used to establish one or more aspects of validity. We will only fund projects that collect data responsibly, ensure that student privacy is respected, and enable responsible AI developers to evaluate if they are meeting target benchmarks and serving students.

- Evaluating AI Performance – Benchmarks and Models as Evaluators: Standardized tools, tasks, metrics, and methods used to evaluate how well AI models (or AI-powered education technologies) perform in education-specific contexts, based on metrics like accuracy, fairness, relevance to learning goals, fitness for intended purpose, or ability to predict performance on standardized and widely-used learning measures. Benchmarks are not limited to validity; in one example, benchmarking one or more aspects of how well a formative assessment process supports an educator is important. Overall, a growing collection of public-good metrics will drive improvements over time to ensure that AI is safe, valid, meaningful, and attractive to educators. We are especially interested in component dimensions of evaluation where comprehensive measures might be misleading (in particular, we do not expect a monolithic validity benchmark). For example, proposers may suggest a modular collection of related benchmarks or models as evaluators (e.g., multiple dimensions for feedback quality or another aspect of formative assessment) that others can combine and test, rather than a single omnibus rating. Benchmark projects will be expected to result in standard tasks + metrics + example data aligned to relevant dimensions (not a single pass/fail).

- Advancing AI Performance – Training Models: Machine learning algorithms that process K-12 educational data and perform tasks specific to evidence-based teaching and learning, such as identifying effective instructional practices or generating real-time feedback for students. When models are shared, innovators, researchers, developers, and others can build on one another’s successful research. The program is interested in funding model-related public goods that are designed for integration into existing formative assessment applications, allow for adaptation and modification, improve domain representations (e.g., structured math representations), and produce model-building tools, pipelines, or processes that remain useful even as frontier models change. Public goods that can run locally or at low cost, or that a technical user can more easily modify for a specific educational context, could be attractive. We are aware that real educational products and services are using multiple models, so lowering costs for a targeted purpose could be valuable even where an overall product continues to use other higher-cost models as well. Models may also be a way to provide the value of a large dataset as a public good without releasing the data itself.
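To illustrate the preference for modular, per-dimension evaluation over a single omnibus rating, here is a minimal Python sketch. The dimension names (`accuracy`, `actionability`, `fairness`), the 0-to-1 scale, and the function name are hypothetical and are not defined by this RFP; a real benchmark would define its own tasks, metrics, and example data.

```python
from statistics import mean

# Hypothetical per-item ratings on a 0-1 scale; the dimension names are
# illustrative assumptions, not dimensions prescribed by the RFP.
ratings = [
    {"accuracy": 0.9, "actionability": 0.6, "fairness": 0.8},
    {"accuracy": 0.7, "actionability": 0.8, "fairness": 0.9},
]


def report_by_dimension(items):
    """Return the mean score of each dimension separately.

    No overall score is computed, so a weak dimension cannot be hidden
    behind a strong one.
    """
    dimensions = sorted({name for item in items for name in item})
    return {
        d: round(mean(item[d] for item in items if d in item), 3)
        for d in dimensions
    }


report = report_by_dimension(ratings)
for dimension, score in report.items():
    print(f"{dimension}: {score}")
```

Keeping the dimensions separate lets other teams combine, reweight, or extend them for their own context.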

We are investing in public goods that are useful to many organizations that create products and services for K-12 education. Recipients of funding will be required to license their funded public goods via a suitable license at least as permissive as Creative Commons Attribution (CC-BY-4.0) for content-like resources or Apache 2.0 for code-like resources. In all cases, recipients will not be able to exclude any type of organization from using the public good for an allowable purpose (e.g., to improve teaching and learning).

This approach does not require applicants to apply the same licensing to unrelated IP used in parallel with these systems, nor to release the raw data used to create these systems. In all cases, privacy, confidentiality, and ethical data standards will be applied.

For more information, see the section that discusses Evaluation Criteria 4: Release and Dissemination Plan in the Proposal Guidance section below.

Note: Proposals to develop proprietary product enhancements will be returned without review.

Assets and Capacity to Enable Rapid Progress with Privacy and other Protections

We will aim to provide funding quickly. Projects should be able to start close to June 1, 2026. Correspondingly, we are seeking teams with capacity and readiness to produce a public good. For example, we seek teams with existing datasets that require a modest amount of enhancement to become high-value public goods. We also seek teams that already have exercised the necessary processes to produce a public asset, which could include data de-identification tools and annotations, or more generally, data sharing agreements, MOUs, and privacy policies that are ready to go. Recognizing that high trust is essential for the future of AI in education, the program will only fund efforts that agree to and can confidently deliver on high standards for data safety and privacy. Assets and capabilities that enable a team to enact a targeted universalism approach will be important. Overall, we seek teams that can build on prior work to enhance or create a public good expediently yet carefully.

Grant Opportunity and Expectations

Eligibility

This opportunity is open to any U.S.-based applicant, including edtech companies, for-profit organizations, non-profit organizations, or school districts. Only one application per lead organization will be accepted, except that universities and other organizations with more than 2,000 staff may submit one application per department. We encourage applications that involve educators or field-facing organizations that work directly with educational practitioners. Note: Partnerships among organizations are encouraged.

Tracks

A responsive proposal must address one of the following tracks:

- Track 1: Proof of Concept. Low-cost prototypes or other initial efforts that can provide evidence that the approach could result in a more broadly available public good. This track is appropriate for making initial progress on an uncertain yet highly innovative approach, and should focus on tackling key challenges rather than producing a comprehensive, large-scale public good.

- Track 2: Enhancing an Existing Asset. Short-term efforts that leverage available datasets, models, or benchmarks to rapidly produce a public good, e.g., within 6-12 months. This track is appropriate for projects that conduct additional technical work to enhance an already-public dataset, to integrate new data sources into a higher-value database, or to convert an existing dataset into a resource that can be more broadly used. Released assets must meet FAIR and other guidelines to enable uptake.

In either track, an aspect of a proposal could involve releasing resources that make it easier to protect privacy, enhance security, or otherwise reduce the potential for harm within a set of formative assessment-related public goods. In general, it will be considered valuable if a project both produces a public good aligned with our vision and releases tools that could support further enhancing that public good or creating other, similar public goods.

Budget and Period of Performance

This solicitation invites proposals for $50,000 to $250,000 for a 6-12 month period of performance. We encourage applicants to request the funds needed to do the work, not the maximum allowed; doing so will increase the number of awards that are possible and cost-efficiency will be considered in proposal review. We anticipate making 4-8 awards.

The recommended starting date is June 1, 2026.

Allowable costs include:

- Staff time, which can include buy-out time for educators who work on the project

- Consulting fees or stipends to experts and advisors (who may be located internationally)

- Equipment and computational resources (including devices, internet access, privacy-compliant analytical applications, computing power, and storage)

- Travel necessary for producing or disseminating the public goods

- Costs of Institutional Review Board (IRB) review, privacy oversight, legal support for data sharing agreements, etc.

- Software or tools that are essential and allocable to the public good being developed

- Subawards or subcontractors performing project-specific tasks

- Translation or accessibility services to support equity and access

- Institutional overhead up to a maximum of 15%

Unallowable costs include:

- Meals, snacks, or alcoholic beverages

- Entertainment, social, or networking events

- Lobbying or advocacy related expenses

- Pre-award costs

- International travel not directly tied to project deliverables

- Expenses that are included in institutional overhead, such as:

- General office supplies not directly allocable to the project

- Administrative or clerical salaries not directly tied to the project

- Capital expenditures (e.g., buildings, renovations)

- Equipment purchases over $5,000 unless pre-approved and justified
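As a rough aid for checking a draft budget against the limits stated in this section ($50,000 to $250,000, a 6-12 month period of performance, and institutional overhead of at most 15%), the following Python sketch may help. The function name and messages are hypothetical. It also assumes that the 15% cap is measured against direct costs, which this RFP does not specify; applicants should confirm the base with the program team.

```python
MIN_REQUEST = 50_000        # dollars, from the RFP
MAX_REQUEST = 250_000       # dollars, from the RFP
MIN_MONTHS, MAX_MONTHS = 6, 12
MAX_OVERHEAD_PERCENT = 15   # ASSUMPTION: percent of direct costs


def check_budget(direct_costs, overhead, months):
    """Return a list of problems found in a draft budget (empty if none).

    Amounts are whole dollars; integer arithmetic avoids rounding issues.
    """
    problems = []
    total = direct_costs + overhead
    if not MIN_REQUEST <= total <= MAX_REQUEST:
        problems.append(f"total request ${total:,} is outside $50,000-$250,000")
    if not MIN_MONTHS <= months <= MAX_MONTHS:
        problems.append(f"period of {months} months is outside 6-12 months")
    if overhead * 100 > MAX_OVERHEAD_PERCENT * direct_costs:
        problems.append("overhead exceeds 15% of direct costs")
    return problems


# Example: $200,000 direct plus $30,000 overhead over 12 months.
print(check_budget(200_000, 30_000, 12))
```

The sketch does not check individual line items, such as the $5,000 equipment threshold or the unallowable categories listed above.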

This RFP is issued as part of a multi-year, $26 million program. Over the next three years, the program will issue additional RFPs as well as more grants. These may have additional tracks and different funding levels.

When and Where to Submit

Submissions will be accepted on this website until March 8, 2026, 11:59:59 PM Pacific Standard Time (PST). You will be asked to register in order to access the application.

What to Submit

Your submission should include a completed form including an abstract, the required PDF attachment, and a separate spreadsheet for the budget. The abstract (300 word limit) will be used to select appropriate peer reviewers. Specifically, the narrative and budget should contain:

The Narrative PDF:

- Please include your abstract in this PDF as well as the form field.

- A description of your project and narrative addressing the topics above (5000 word limit). Proposers should note that while this RFP provides detailed guidance on how proposals will be evaluated, many of the guidelines can be addressed concisely. A separate paragraph for each guideline is not required.

- Supplemental materials (up to 5 pages) can include prior work or early prototypes, such as initial data samples, mock-ups, or demo items

- Resumes or CVs for the project director and any other key staff

- If additional organizations or consultants are named in the project description, short letters confirming that they have reviewed their role and are willing to serve (similar to NSF Letters of Commitment). These letters are required for documentation purposes only and will not be reviewed by peer reviewers.

- References and Citations, not included in the word count

- Please aim for legibility and follow standard proposal formatting such as: 12pt font size for text, at least 1.15 line spacing, a minimum of 10pt font for images/tables, and 1” margins.

- Save the PDF with the file name convention PI LAST NAME_K12AI_RFP1_Narrative.PDF

The Budget document will be submitted as a .XLS or .XLSX file and should include:

- Budget in the template provided, along with justification.

- Link to forced copy of Google Sheet

- Link to download .XLSX file

- Download a copy of the template and save your version with the file name convention PI LAST NAME_K12AI_RFP1_BUDGET.XLS

Evaluation Criteria

Proposals will be reviewed by both peer reviewers and the program team on four equally important criteria:

- Significance: WHY the project is important to K-12 U.S. students and their teachers and to the market that develops services and products for this audience.

- Assets and Capabilities: WHAT and WHO will enable project success.

- Project Workplan: HOW the project will be completed.

- Release and Dissemination: OUTPUTS of the project.

An FAQ will be available on the K-12 AI Infrastructure Program website. We will also offer office hours to respond to questions about the RFP.

Proposal Guidance

For ease of reviewing, proposals should have clear sections corresponding to the evaluation criteria and following the purpose described above for each section (i.e., Why, What and Who, How, Outputs). There is no recommended or required length by section, but as described above, the total length is limited to 5000 words.

The text below will be read by reviewers as guidance for their ratings, thus writing to this guidance will be valued. Notwithstanding this proposal guidance, submissions may include additional or different information to make the best case for their effort. We provide illustrative examples in the text boxes below. Examples are NOT requirements; we welcome proposals that differ from these examples.

Evaluation Criteria 1: Significance

Proposals must target the program’s formative assessment vision, but can save space by assuming that reviewers understand the broad rationale for formative assessment.

Focus within Formative Assessment. The first page must identify the specific unmet needs, gaps, or opportunities within formative assessment that will be addressed, and provide the rationale for why the team will be able to make rapid progress in strongly addressing that specific need, gap, or opportunity. Why will these specific improvements to formative assessment move the needle? What will be the scope of the proposed public good (size, modality, subject area, grade levels)?

Note: The remaining Significance guidelines can be addressed in any order.

Operationalizing Research. What learning sciences or other research-based concepts, methods, or insights will be incorporated into the public good?

Targeted Universalism. How will the advance enabled by the proposed public good(s) enable success for every student? Why will it make an important difference for targeted populations of students who otherwise might be underserved?

Type(s) of Public Good(s). If the effort will produce a benchmark, why will that drive the field forward and lead to impacts for millions of students? Likewise, why is producing a dataset, model, or an alternate public good the right choice? What resources already exist, and what potential benefits will this work provide beyond them?

Potential for Wide Adoption and Large-Scale Student Impacts. Which technical end-users are likely to adopt the public good, and what benefits and cost advantages will they realize? Is there evidence of demand or likely usage? Why can we expect that the set of possible adopters will actually use the proposed public good(s) in offerings that rapidly scale to large numbers of students?

Evaluation Criteria 2: Assets and Capabilities

For Track 1, describe the existing assets that can empower rapid progress on the proof-of-concept effort.

For Track 2, describe the existing asset(s) that will provide the basis for a public good. Prompts that might be helpful in creating your description include:

- What does the asset provide? Describe the existing asset.

- What is the current usage of the asset?

- Are there existing evaluations that establish the impact of the asset towards improving K-12 education?

- What makes it appropriate for the proposed public good?

- How does the asset represent the targeted universalism goals described in your Significance?

- What tools, processes, and workflows exist to support the work?

- What equipment, compute, travel, facilities and other resources would you need to conduct your project successfully? Will you have access to these resources through existing means or through use of grant funds?

- What legal agreements and policies establish the rights to release the public good?

- What types and sensitivity of data will you collect, and how will it be managed responsibly?

For both tracks, describe the sensitivity of data that will be used during the period of performance, and the data infrastructure that will be used along with any standards, certifications, etc.

For both tracks, discuss the expertise that will enable successful completion of the work as well as any infrastructure or facilities that are available to support the work.

- To what extent does your team have all the expertise necessary to execute the project successfully?

- Who has the expertise and experiences to enact the Targeted Universalism focus you described in the Significance section?

- Who has the expertise and experiences to manage any sensitive data the project will use?

- Who has expertise or experience in evaluating the education-specific value of the intended work?

- What depth of experience does your team have in producing public goods or education-focused AI resources similar to datasets, models, and benchmarks?

We encourage applicants to consider how the perspectives of educators, learners and other impacted communities are represented on your team. We encourage integrating a learning scientist with expertise on the learning science concepts that will be used, especially to ensure that those concepts are handled with fidelity.

Applicants may submit Supplemental Materials with any prior work or early prototypes, such as initial data samples, mock-ups, or demo items. They may also describe the essence of existing data sharing, IRB or other legal agreements that could be later shared with the program team for detailed review.

Evaluation Criteria 3: Project Workplan

The overarching question for this section is: What is the justification and evidence that the workplan will confidently and safely produce the public good(s) described in the Significance section, building on what is available as described in the Assets and Capabilities section?

The workplan may begin by clearly addressing objectives: what needs to be done to realize the significance of the public goods using the assets and capabilities, both of which have already been described.

To make the argument for the workplan, proposers may cover aspects such as these in any order or combination:

- Kickoff: How a rapid start will be achieved once funding is available.

- Phases, Milestones, Timelines: Steps, phases, or workflows, showing how the work will proceed. Is adequate time available for each step? A chart is acceptable.

- Centering: How the targeted population(s) will be centered in the work.

- Contingencies: The most important contingencies for additional known risks and how they can be handled.

- Roles: For major responsibilities, is it clear who will do what? In particular, describe data management roles.

- Data Security, Privacy Protection, Quality Assurance: Funded projects must anticipate working with the K-12 AI Program Team during the period of performance to complete a data management plan and participating in data governance review and audits.

Note: The workplan can end with preparing “release candidates” of public goods; the final quality assurance process can be described in the next section.

Evaluation Criteria 4: Release and Dissemination Plan

Track 1 proposals should release a public good (see info for Track 2, below), but the public good will be a prototype or proof of concept and therefore will be limited in scope. An expected deliverable for a Track 1 proposal is also a memo and briefing to the program team that explains what further work would lead to a fully realized public good, as further funding may be available after the conclusion of the Track 1 work.

Track 2 proposals require a more thorough release and dissemination plan around a completed public good. The program team will collaborate with awarded projects on release and dissemination. At a minimum, public goods will be hosted in the program’s digital repository, which will be available at no cost and without restriction. Beyond releasing public goods, we seek to publicize and support the public goods so that they become widely known, adopted, and used to support students and educators at scale.

Public goods developed in either track must respond to FAIR Principles (Findable, Accessible, Interoperable, Re-usable). The minimum required alignment will be accomplished by working with the K-12 AI Infrastructure Program team to deposit the public goods in the Program’s repository, with a DOI, download capability and appropriate documentation. Teams can propose to go beyond this minimum, especially where this would encourage greater adoption of the public goods.
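One way a team might track the minimum deposit items named above (a DOI, download capability, and documentation) is a small release record. The Python sketch below is illustrative only: the class, its field names, and the example values are hypothetical, the URLs are placeholders, and the Program’s repository may require a different metadata schema.

```python
from dataclasses import dataclass, asdict


@dataclass
class ReleaseRecord:
    """Hypothetical checklist of items needed before depositing a public good."""
    title: str
    doi: str                # assigned at deposit; left empty until then
    license_id: str         # e.g., "CC-BY-4.0" or "Apache-2.0"
    download_url: str
    documentation_url: str

    def missing_fields(self):
        """Return the names of fields that are still empty, in declaration order."""
        return [name for name, value in asdict(self).items() if not value]


# Placeholder values for illustration; none of these refer to a real asset.
record = ReleaseRecord(
    title="Example formative assessment dataset",
    doi="",
    license_id="CC-BY-4.0",
    download_url="https://example.org/download",
    documentation_url="https://example.org/docs",
)
print(record.missing_fields())
```

A record like this only covers the Findable and Accessible basics; interoperability and reuse depend on the formats and documentation a team actually provides.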

The Release plan necessarily includes:

- Quality Assurance. Describe how the project team anticipates working with the program team to review and approve public goods prior to release, to ensure quality and protections for privacy, data security, and other safety concerns.

- Licensing Terms. The program team has selected Creative Commons (by Attribution) as the recommended license for datasets and knowledge products (e.g., technical reports, research results) and Apache v2.0 for software and code (e.g., evaluations, models, applications). Other licenses may be negotiated during the pre-award phase, but a persistent requirement is that the resources are available for commercial or non-commercial use.

- Limitations and restrictions based on data usage agreements, IRBs or ethical considerations.

- Evaluations. The project team will work with the Program’s evaluation team to produce compelling evidence of the value of the public good, compared to existing practice. In support of this, the proposal can describe how they anticipate establishing fitness for intended use, known limitations and demographic bias considerations (e.g., reporting bias and fairness metrics), validity measurements, and indications that the public good(s) can advance the field on desirable metrics.

- Documentation and Supports. The team can describe the resources that will accompany the public good and that will make adoption and use more likely.
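As one illustration of the kind of bias and fairness reporting mentioned under Evaluations, the Python sketch below computes accuracy separately for each student subgroup and the largest gap between subgroups. The group labels, records, and function names are invented for illustration, and this is only one of many possible fairness metrics; the RFP does not prescribe a specific one.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute accuracy per subgroup.

    `records` is an iterable of (group, predicted, actual) tuples.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {group: correct[group] / total[group] for group in total}


def largest_gap(per_group):
    """Difference between the best-served and worst-served subgroup."""
    return max(per_group.values()) - min(per_group.values())


# Invented example data: (subgroup, model's rating, human rater's rating).
records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 1, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
]

per_group = accuracy_by_group(records)
print(per_group)
print(largest_gap(per_group))
```

Reporting the per-group values alongside the gap, rather than a single pooled accuracy, makes it visible when a public good serves some populations less well than others.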

Aspects of a compelling dissemination plan may include:

- Possible Data Competitions. To engage technical users across many organizations, the program intends to host data competitions around public goods. It would be valuable for teams to produce one or more designs for a data competition as the proposed project moves into its dissemination phase.

- Broad Promotion. The team can describe how they can directly promote the public good to an audience, such as through a webinar, conference presentation, targeted emails or posts in relevant forums, etc.

- Specific Relationships. The team may have relationships to a hyperscaler, education technology standards organization, or other partner whose incorporation of the public good may lead to large scale impacts on student success. If so, describe how those relationships could be activated.

References

powell, j. a., Menendian, S., & Ake, W. (2019). Targeted universalism: Policy & practice [Primer]. Othering & Belonging Institute, University of California, Berkeley. https://belonging.berkeley.edu/sites/default/files/targeted_universalism_primer.pdf

Past Office Hours

Targeted Universalism Office Hour Registration: Tuesday, 2/17/26 at 5PM ET/2PM PT

Public Good/Technical Office Hour Registration: Monday, 2/23/26 at 3PM ET/12PM PT

General Questions Office Hour Registration: Monday, 3/2/26 at 12PM ET/9AM PT